Bare-Metal Kubernetes HA: Floating IPs Over BGP With Cilium and UniFi's UCG-Fiber

Some parts of this post were co-authored and proof-read by an LLM. Every config, command, and claim in here was verified against the live cluster - the corrections are mine.

Most Kubernetes HA stories start with a managed load balancer in the cloud. Mine doesn't have one. My cluster consists of three (quite well spec'd) Minisforum MS-01 mini PCs in a 3D-printed rack sitting in my home office, behind a residential ISP, with a UniFi Cloud Gateway (UCG-Fiber) as the only piece of L3 between me and the internet (not counting the cable modem though). The nodes are running as control-plane and worker node at the same time. So when kubectl started timing out the moment a single node went down (while I patched the worker nodes for CopyFail), the answer wasn't just "point your kubeconfig at one of the remaining nodes". It was "finally configure BGP on the gateway, let Cilium do the rest". Well.. sort of. I already had BGP running for the application traffic, now I wanted to have it for the Kube API traffic too.

This post is the full how-to. Two floating LoadBalancer IPs, one for application ingress through Traefik, one for the Kubernetes API server through cilium-envoy with active TCP health checks. Plus the Cilium 1.19 quirk that silently breaks the apiserver path - a five-line YAML change that took the longest to find.

My 3D-printed rack with the three nodes and a SFP+ switch at the top


Why BGP At All

There is no L4/L7 load balancer in front of my cluster. MetalLB in ARP mode would work, but the UCG-Fiber can speak BGP - so why fall back to the L2 hack? An HAProxy or NGINX VM in front would just be another single-node single point of failure, so nope.

What's left is the obvious answer: the UniFi Gateway does the fail-over. Cilium's BGP control plane advertises a /32 for each LoadBalancer Service to the upstream router. The router ECMP's incoming traffic across all the cluster nodes that hold the route. Add a node, the route gets a new next-hop. Remove a node, the route loses one. The failure domain is the router itself - which is the failure domain anyway in any setup that has a router at all.
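To make the ECMP behaviour concrete, here is a minimal Python sketch of per-flow next-hop selection. The hash function, the pick_next_hop helper, and the sample client flow are assumptions for illustration only; the UCG's real hashing is whatever its kernel implements.

```python
import hashlib

# Next-hops the router holds for one /32 VIP (node IPs from this post).
NEXT_HOPS = ["10.31.0.132", "10.31.0.161", "10.31.0.218"]


def pick_next_hop(flow, next_hops):
    """Map a flow 5-tuple onto one next-hop (illustrative hash, not the UCG's)."""
    if not next_hops:
        raise ValueError("route withdrawn: no next-hop left")
    digest = hashlib.sha256(repr(flow).encode()).digest()
    return next_hops[int.from_bytes(digest[:4], "big") % len(next_hops)]


# (src_ip, src_port, dst_ip, dst_port, proto) - hypothetical client flow
flow = ("203.0.113.7", 51514, "10.31.0.81", 443, "tcp")

before = pick_next_hop(flow, NEXT_HOPS)

# A node goes away: its BGP session drops and the router removes that next-hop.
survivors = [hop for hop in NEXT_HOPS if hop != "10.31.0.161"]
after = pick_next_hop(flow, survivors)

print(before, "->", after)
```

The point of the sketch: a given flow always lands on the same node while the next-hop set is stable, and it can only land on surviving nodes once a next-hop is withdrawn.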

Cilium ships everything you need: the BGP speaker, an LB IPAM controller, and (since 1.16) a clean way to plug cilium-envoy in front of any Service. Wiring them up correctly for both ingress traffic and the apiserver was a two-evening project, and one of those evenings was the gotcha at the end of this post.

What We're Building

Two LoadBalancer IPs from a predefined Cilium-managed pool (in my case: 10.31.0.80/28):

- 10.31.0.80 → cilium-envoy → kube-apiserver, with active TCP health checks for quick failover
- 10.31.0.81 → Traefik → all application HTTPRoutes

                Internet
                    │
                    ▼
      ┌───────────────────────────┐
      │ UCG-Fiber (BGP peer)      │  AS 64512, FRR
      │ 10.31.0.1                 │
      └─────────────┬─────────────┘
                    │ BGP (eBGP, multihop=1)
                    │ advertises: 10.31.0.80/28 LB pool
                    ▼
      ┌───────────────────────────┐
      │ TrendNet TL2-F7120        │  L2 switch, 3× LACP groups
      └─────┬─────────┬─────────┬─┘
            │         │         │
        LACP│     LACP│     LACP│   2×10G SFP+ per node
            ▼         ▼         ▼
        ┌──────┐  ┌──────┐  ┌──────┐
        │ cat01│  │ cat02│  │ cat03│  3× HA control plane
        │ .218 │  │ .161 │  │ .132 │  Ubuntu 24.04 / k3s
        └──────┘  └──────┘  └──────┘  bond0 = 20 Gbit/s

Cilium 1.19.3 + cilium-envoy on every node
├── CNI (vxlan tunneled pod network)
├── kube-proxy replacement (eBPF socketLB)
├── BGP control plane → UCG
├── LB IPAM pool 10.31.0.80/28 (16 VIPs)
└── L7 proxy via cilium-envoy DaemonSet

Note: To maintain some simplicity I left out a few components.

Versions used here: Cilium 1.19.3, k3s v1.35.1+k3s1, Traefik 39.0.7. Ubuntu 24.04 on every node. Everything else in the cluster is also irrelevant for this post.

Repo Layout: Ansible Is Infra, Helm Is Overlay

I keep two repos for this cluster, and the split matters for the rest of this guide.

provisioning/infra is Ansible-managed bootstrap. Anything that depends on inventory, host state, or cluster-level config the cluster doesn't yet have lives here: the k3s install, kernel/sysctl/HugePages tuning, the Cilium Helm install, BGP custom resources, the FRR config on the UCG, and the kube-apiserver-bgp Service+EndpointSlice+CCEC. The last one belongs in Ansible because the EndpointSlice's node IPs come straight from the inventory and the CRDs only exist after Cilium is installed.

app-of-apps is the ArgoCD-managed runtime overlay: Traefik, cert-manager, external-dns, every application HTTPRoute and Middleware, all the Argo Applications themselves. Anything that re-converges cleanly via kubectl apply (through ArgoCD) on a running cluster lives here.

The rule I use: bootstrap goes to Ansible, runtime goes to app-of-apps. Both are public-ish (secrets are referenced through Infisical, not committed) and both get diff-reviewed before merge. The Argo side adopts ownership of the BGP CRs after the cluster is up - Ansible applies them once at install time, then ArgoCD takes over via tracking annotations and Ansible's role becomes a no-op on subsequent runs. That's the cleanest way I've found to break the chicken-and-egg of "Argo can't run because the cluster isn't reachable, but the CRs Argo would deploy are what makes the cluster reachable".

Every code block below comes from one of these files. Each block has the path on the line above it. Here's the trimmed tree of files this post actually shows:

provisioning/infra/playbooks/
├── group_vars/k3s.yml                           # tunables (ASNs, TLS SAN list)
├── inventory/hosts.yaml                         # node IPs and groups
└── roles/
    ├── ucg_bgp/
    │   ├── defaults/main.yml                    # router-side ASNs, peer IPs
    │   ├── templates/frr-bgp.conf.j2            # FRR config (jinja)
    │   └── tasks/main.yml                       # vtysh apply + write memory
    ├── cilium/
    │   ├── templates/cilium-values.yaml.j2      # Helm values
    │   ├── templates/cilium-bgp-config.yaml.j2  # the four BGP CRs
    │   └── templates/kube-apiserver-bgp.yaml.j2 # HA API path
    └── k3s_server/templates/k3s-config.yaml.j2  # k3s flags + tls-san

app-of-apps/platform/
└── values.yaml                                  # Helm values root (Traefik etc.)

The Network Underneath

Two VLANs.

VLAN 1 (10.42.0.0/24) is the management plane: PXE / cloud-init / IPMI / admin SSH only. Nothing user-facing touches it.

VLAN 300 (10.31.0.0/24) is the cluster plane and the BGP peering segment. Node IPs, the LoadBalancer pool (10.31.0.80/28), and the UCG itself (10.31.0.1) all live here. Default gateway for the cluster nodes is the UCG.

Each node has two 10G SFP+ NICs bonded LACP-style (mode 802.3ad, layer3+4 hash, fast lacp-rate) for 20 Gbit/s aggregate. The bond carries VLAN 300 untagged. The switch is a TrendNet TL2-F7120 with three port-channel groups (Gi0/1+2, Gi0/3+4, Gi0/5+6), one per node, with port-channel load-balance src-dest-ip so the switch spreads different source/destination IP pairs across both links instead of sending everything down a single port.

configure terminal
port-channel load-balance src-dest-ip
interface Gigabitethernet 0/1
 channel-group 1 mode active
 exit
interface Gigabitethernet 0/2
 channel-group 1 mode active
 exit
... # (repeat for groups 2 and 3)
copy running-config startup-config

How the nodes get to a running OS in the first place - PXE off netboot.xyz, cloud-init pulled from cloudinit.clustername.io, Ubuntu 24.04 from versioned netboot squashfs - is its own story. It's not in this post. Assume three Ubuntu boxes with k3s installed and the bond up.

BGP Between Cluster and Router

Two ends to configure: the router (FRR running on the UCG) and the cluster (Cilium's BGP control plane). Both managed from Ansible.

The Router Side

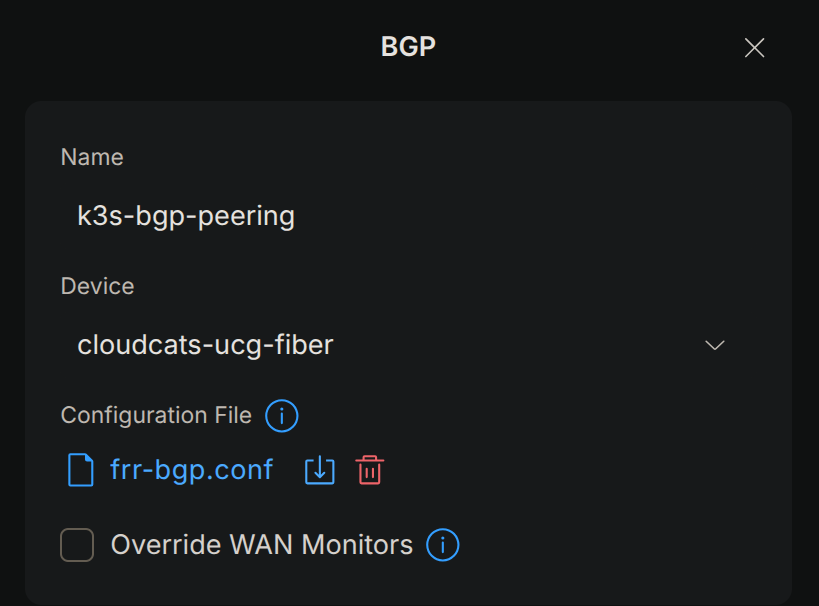

Two clicks in the UniFi controller before any of this works. Note: You could also use the GUI, but I want to have it in configuration management as well.

Enable SSH on the gateway. Settings → Console → SSH. Set a password or, better, an SSH key. The Ansible role logs in as root over SSH and runs vtysh, so this isn't optional.

Enable Dynamic Routing. Settings → Routing → BGP (or, depending on the UniFi UI version, Policy Engine → Dynamic Routing → BGP). Toggle it on. This is what makes the UCG load FRR. Without this toggle, vtysh doesn't work on the gateway and the role fails immediately.

Settings → Routing in the UniFi controller. Toggle Dynamic Routing on, BGP becomes available. Required before the gateway will accept any FRR config.


router bgp {{ ucg_bgp_router_asn }}
 bgp router-id {{ ucg_bgp_router_id }}
 no bgp ebgp-requires-policy
 no bgp default ipv4-unicast
 !
 neighbor k3s-nodes peer-group
 neighbor k3s-nodes remote-as {{ ucg_bgp_cluster_asn }}
{% for neighbor_ip in ucg_bgp_neighbors %}
 neighbor {{ neighbor_ip }} peer-group k3s-nodes
{% endfor %}
 !
 address-family ipv4 unicast
  neighbor k3s-nodes activate
  neighbor k3s-nodes soft-reconfiguration inbound
 exit-address-family
!

The variables - with the neighbor list pulled straight from inventory:

ucg_bgp_router_asn: 64512
ucg_bgp_router_id: "10.31.0.1"
ucg_bgp_cluster_asn: 65000
ucg_bgp_neighbors: "{{ groups['k3s_server'] | map('extract', hostvars, 'ansible_host') | list }}"

The role applies the rendered file with two vtysh calls - one to load it into FRR's running config, one to persist that running config to disk:

- name: Apply FRR BGP configuration
  ansible.builtin.command: vtysh -f /tmp/frr-bgp.conf

- name: Save FRR configuration
  ansible.builtin.command: vtysh -c "write memory"

write memory is what makes the config survive a reboot - FRR persists its running config to disk. UniFi firmware updates do not nuke it; the BGP toggle in the UI is what would nuke it, and that's a deliberate human action.

After running the role, cat /etc/frr/frr.conf on the gateway shows the BGP block FRR is actually using - rendered, with the ! separators in the Jinja stripped by FRR's own writer:

router bgp 64512
 bgp router-id 10.31.0.1
 no bgp ebgp-requires-policy
 no bgp default ipv4-unicast
 neighbor k3s-nodes peer-group
 neighbor k3s-nodes remote-as 65000
 neighbor 10.31.0.218 peer-group k3s-nodes
 neighbor 10.31.0.161 peer-group k3s-nodes
 neighbor 10.31.0.132 peer-group k3s-nodes
 address-family ipv4 unicast
  neighbor k3s-nodes activate
  neighbor k3s-nodes soft-reconfiguration inbound
 exit-address-family

After the toggles are on, the Ansible role pushes the config.

Three FRR-isms worth knowing: no bgp default ipv4-unicast keeps unicast off until you turn it on inside the address-family block (otherwise FRR auto-activates everything for every neighbor and you fight defaults). The peer-group is just a template for shared settings across all three nodes. soft-reconfiguration inbound makes route refreshes cheap if you change policy later - costs a bit of RAM, doesn't matter at this scale.

root@clustername-ucg-fiber:~# vtysh -c "show ip bgp summary"

IPv4 Unicast Summary:
BGP router identifier 10.31.0.1, local AS number 64512 VRF default vrf-id 0
BGP table version 11
RIB entries 3, using 384 bytes of memory
Peers 3, using 71 KiB of memory
Peer groups 1, using 64 bytes of memory

Neighbor        V     AS  MsgRcvd  MsgSent  TblVer  InQ  OutQ   Up/Down  State/PfxRcd  PfxSnt  Desc
10.31.0.132     4  65000   425746   425736      11    0     0  01:47:44             2       2  N/A
10.31.0.161     4  65000   425747   425739      11    0     0  01:47:57             2       2  N/A
10.31.0.218     4  65000   425747   425739      11    0     0  01:47:56             2       2  N/A

Total number of neighbors 3

vtysh -c 'show ip bgp summary' on the UCG. All three nodes in Established state, 2 prefixes received - one per LoadBalancer VIP.
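If you want to check that output from a script rather than by eye, a small parser is enough. This is a sketch, assuming the column layout shown above (State/PfxRcd is the tenth column); the established_peers helper is made up for this post and is not part of FRR or the Ansible role.

```python
SUMMARY = """\
Neighbor        V     AS  MsgRcvd  MsgSent  TblVer  InQ  OutQ   Up/Down  State/PfxRcd  PfxSnt  Desc
10.31.0.132     4  65000   425746   425736      11    0     0  01:47:44             2       2  N/A
10.31.0.161     4  65000   425747   425739      11    0     0  01:47:57             2       2  N/A
10.31.0.218     4  65000   425747   425739      11    0     0  01:47:56             2       2  N/A
"""


def established_peers(summary):
    """Return {neighbor: prefixes_received} for peers in Established state.

    FRR prints a prefix count in State/PfxRcd once a session is Established,
    and a state name (Idle, Active, Connect, ...) otherwise.
    """
    peers = {}
    for line in summary.splitlines():
        fields = line.split()
        # Neighbor rows start with an IPv4 address and have at least 10 columns.
        if len(fields) < 10 or fields[0].count(".") != 3:
            continue
        state_or_count = fields[9]
        if state_or_count.isdigit():
            peers[fields[0]] = int(state_or_count)
    return peers


peers = established_peers(SUMMARY)
print(peers)
```

Feeding it the output of ssh root@10.31.0.1 'vtysh -c "show ip bgp summary"' and asserting on three peers would be one way to turn the manual check into a smoke test.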

Install Cilium With the BGP Control Plane

The Cilium Helm values file is small - everything that matters for this post fits in ten lines:

operator:
  replicas: 1
bgpControlPlane:
  enabled: true
kubeProxyReplacement: true
envoyConfig:
  enabled: true

Dissecting it:

- bgpControlPlane.enabled: true - installs the Cilium BGP CRDs and the operator-side controller that reads them.
- kubeProxyReplacement: true - replaces kube-proxy entirely with Cilium's eBPF socketLB. Required not just for performance but because the CCEC in the apiserver section below relies on socketLB to redirect Service traffic into cilium-envoy. Without this, hand-rolled CCEC services: bindings are silently a no-op. (Migration order matters - see Pitfalls.)
- envoyConfig.enabled: true - installs the CiliumEnvoyConfig and CiliumClusterwideEnvoyConfig (CCEC) CRDs, plus the operator controller that pushes them into Envoy via xDS.

The Ansible role drops the values file at /tmp/cilium-values.yaml and runs:

helm upgrade --install cilium cilium/cilium \
  --version 1.19.3 \
  --namespace kube-system \
  --values /tmp/cilium-values.yaml \
  --kubeconfig /etc/rancher/k3s/k3s.yaml

Then a rolling restart of daemonset/cilium and deployment/cilium-operator to pick up the new config.

The Four BGP Custom Resources

All four resources live in the same templated file. I'll quote them in dependency order.

apiVersion: cilium.io/v2
kind: CiliumLoadBalancerIPPool
metadata:
  name: bgp-pool
spec:
  blocks:
    - cidr: "10.31.0.80/28"

The pool. Defines the range of VIPs that Cilium can hand out to LoadBalancer Services. 10.31.0.80/28 spans 16 addresses (10.31.0.80 to 10.31.0.95), and since the first one is in use as a VIP here, it is not reserved as a network address. That's plenty for a homelab - I currently use two.
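A quick way to sanity-check the range before pinning IPs is Python's ipaddress module. This only enumerates the block; whether Cilium hands out the first and last address depends on the pool's settings.

```python
import ipaddress

pool = ipaddress.ip_network("10.31.0.80/28")
addresses = [str(ip) for ip in pool]  # iterating a network yields every address

print(len(addresses), addresses[0], addresses[-1])  # 16 10.31.0.80 10.31.0.95

# The two VIPs pinned in this post must fall inside the pool.
for vip in ("10.31.0.80", "10.31.0.81"):
    assert ipaddress.ip_address(vip) in pool
```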

apiVersion: cilium.io/v2
kind: CiliumBGPClusterConfig
metadata:
  name: cilium-bgp
spec:
  nodeSelector:
    matchLabels:
      node.kubernetes.io/instance-type: bare-metal
  bgpInstances:
    - name: k3s-bgp
      localASN: 65000
      peers:
        - name: ucg-fiber
          peerASN: 64512
          peerAddress: "10.31.0.1"
          peerConfigRef:
            name: ucg-fiber-peer

The ClusterConfig says which nodes peer (the bare-metal label selector matches all three k3s nodes), the cluster's local ASN, and one or more peer entries. Each peer references a CiliumBGPPeerConfig by name for the rest of its settings.

apiVersion: cilium.io/v2
kind: CiliumBGPPeerConfig
metadata:
  name: ucg-fiber-peer
spec:
  timers:
    holdTimeSeconds: 15
    keepAliveTimeSeconds: 5
  gracefulRestart:
    enabled: true
    restartTimeSeconds: 120
  families:
    - afi: ipv4
      safi: unicast
      advertisements:
        matchLabels:
          advertise: bgp

The PeerConfig holds the timers, the address families, and - crucially - the advertisements: selector that picks up matching CiliumBGPAdvertisement objects by label. 5/15s keepalive/hold means the session detects a dead peer within the 15 s hold time.

apiVersion: cilium.io/v2
kind: CiliumBGPAdvertisement
metadata:
  name: lb-services
  labels:
    advertise: bgp
spec:
  advertisements:
    - advertisementType: Service
      service:
        addresses:
          - LoadBalancerIP
      selector:
        matchExpressions:
          - key: app.kubernetes.io/name
            operator: Exists

The Advertisement is what gets advertised. Type Service, address LoadBalancerIP, and a Service selector. The selector here says "any Service with the app.kubernetes.io/name label, regardless of value". That's because every chart-installed Service in this cluster sets that label by default - it's the closest thing to "advertise everything that's a real Service" without ever advertising Cilium-internal stuff. If you write your own LoadBalancer Service, remember to add the label or the IP won't reach the BGP wire.

The chain in one line: Pool → ClusterConfig → PeerConfig → Advertisement → matching Service label = your VIP appears on the wire.
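The last link of that chain, the Exists selector, is the one that bites in practice. A minimal Python sketch of how it behaves, with a made-up list of Services (the third entry is hypothetical and deliberately missing the label); this emulates the selector semantics only, not Cilium's controller:

```python
def matches_exists(labels, key="app.kubernetes.io/name"):
    """Emulate a matchExpressions entry with operator Exists: only the key matters."""
    return key in labels


# Hypothetical Services: name -> (type, labels)
services = {
    "traefik": ("LoadBalancer", {"app.kubernetes.io/name": "traefik"}),
    "kube-apiserver-bgp": ("LoadBalancer", {"app.kubernetes.io/name": "kube-apiserver-bgp"}),
    "my-handwritten-lb": ("LoadBalancer", {"app": "demo"}),  # label missing
}

advertised = sorted(
    name
    for name, (svc_type, labels) in services.items()
    if svc_type == "LoadBalancer" and matches_exists(labels)
)
print(advertised)  # ['kube-apiserver-bgp', 'traefik']
```

The hand-written Service still gets an IP from the pool, it just never shows up as a route on the router.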

Bootstrap order matters. Ansible applies these CRs during the cilium role with kubectl apply --server-side. After ArgoCD comes online, the cilium-bgp-custom-resources Helm chart in app-of-apps adopts ownership through Argo's tracking annotations - subsequent Ansible runs are idempotent against what Argo manages. This avoids the "Argo can't deploy them because BGP isn't up so Argo isn't reachable" deadlock you'd otherwise hit on a fresh cluster.

Verify the Session

Three commands, three places:

# Cluster side
kubectl -n kube-system exec ds/cilium -c cilium-agent -- cilium-dbg bgp peers

# Router side
ssh root@10.31.0.1 'vtysh -c "show ip bgp summary"'
ssh root@10.31.0.1 'vtysh -c "show ip bgp"'

# IPAM
kubectl get ciliumloadbalancerippool bgp-pool -o jsonpath='{.status.conditions}'

cilium-dbg bgp peers should show three entries (one per node) all in established state. The UCG side should show three peers and as many prefixes as you have LoadBalancer Services with the right label. The IPAM pool's status conditions should report IPAMRequestSatisfied=True for any Service that has pulled an IP.

for pod in $(kubectl -n kube-system get po -l k8s-app=cilium -o name); do
  node=$(kubectl -n kube-system get "$pod" -o jsonpath='{.spec.nodeName}')
  echo "--- $node ---"
  kubectl -n kube-system exec "$pod" -c cilium-agent -- cilium-dbg bgp peers
done

--- cat01 ---
Local AS   Peer AS   Peer Address    Session       Uptime     Family         Received   Advertised
65000      64512     10.31.0.1:179   established   4h55m38s   ipv4/unicast   2          2
--- cat02 ---
Local AS   Peer AS   Peer Address    Session       Uptime     Family         Received   Advertised
65000      64512     10.31.0.1:179   established   4h55m52s   ipv4/unicast   2          2
--- cat03 ---
Local AS   Peer AS   Peer Address    Session       Uptime     Family         Received   Advertised
65000      64512     10.31.0.1:179   established   4h55m52s   ipv4/unicast   2          2

cilium-dbg bgp peers showing three established sessions. All nodes are peered with the UCG and the route counters are going up.

Floating IP for Application Traffic

This part is short. The pattern from the previous section just works for any LoadBalancer Service - including Traefik. Three things to set up:

Pin the IP

Add the lbipam.cilium.io/ips annotation to the Service so Cilium hands it the same VIP every time:

service:
  type: LoadBalancer
  annotations:
    "lbipam.cilium.io/ips": "10.31.0.81"

You can let Cilium pick whatever's free, but kubeconfigs and DNS records both depend on the IP being stable across LoadBalancer re-creates. Pinning it also keeps external-dns from rewriting records every time the Service is recreated.

Match the Advertisement Selector

The Advertisement above selects on app.kubernetes.io/name Exists. Traefik's Helm chart sets that label by default. Worth checking when you write your own LoadBalancer Service - if the label isn't there, the route never makes it to BGP and you'll waste time wondering why the IP doesn't ping from the outside.

The Traefik Helm Values

The full Traefik values block, with bits unrelated to this post stripped out:

traefik:
  enabled: true
  values:
    ingressClass:
      isDefaultClass: true
    deployment:
      kind: DaemonSet
    service:
      type: LoadBalancer
      annotations:
        "lbipam.cilium.io/ips": "10.31.0.81"
    gatewayClass:
      enabled: true
    gateway:
      enabled: true
      namespace: traefik
      annotations:
        cert-manager.io/cluster-issuer: letsencrypt-issuer
      listeners:
        web:
          port: 8000
          protocol: HTTP
          hostname: "*.{{ .Values.baseClusterDomain }}"
          namespacePolicy:
            from: All
        websecure:
          port: 8443
          protocol: HTTPS
          hostname: "*.{{ .Values.baseClusterDomain }}"
          namespacePolicy:
            from: All
          mode: Terminate
          certificateRefs:
            - kind: Secret
              name: traefik-default-tls
        websecure-clustername:
          port: 8443
          protocol: HTTPS
          hostname: "*.clustername.io"
          namespacePolicy:
            from: All
          mode: Terminate
          certificateRefs:
            - kind: Secret
              name: traefik-clustername-tls
    providers:
      kubernetesIngress:
        enabled: true
      kubernetesCRD:
        enabled: true
        allowCrossNamespace: true
      kubernetesGateway:
        enabled: true
    ports:
      web:
        http:
          redirections:
            entryPoint:
              to: websecure
              scheme: https
              permanent: true

A few things that aren't obvious:

- kind: DaemonSet - one Traefik replica per node. Combined with the BGP advertisement happening on every node too, this means every cluster node can serve the VIP locally. ECMP on the UCG distributes incoming flows across all three next-hops.
- Two HTTPS listeners on the same port (8443). Each has its own hostname filter and its own cert reference, so cert-manager's gateway-shim issues two separate wildcard certs - one for *.dev.ber1.clustername.io, one for *.clustername.io. The HTTP listener does a permanent redirect to HTTPS at the entrypoint level.
- gatewayClass.enabled: true - this cluster uses Gateway API for app routing, not Ingress. The chart still ships an IngressClass so legacy charts that emit an Ingress keep working, but new things attach via HTTPRoute.

cert-manager and external-dns

Two short snippets that make the rest of this self-service.

cert-manager runs with three extra args:

# ...
certManager:
  values:
    extraArgs:
      - --enable-gateway-api
      - --dns01-recursive-nameservers-only
      - --dns01-recursive-nameservers=10.43.0.10:53
# ...

--enable-gateway-api is what actually starts the gateway-shim controller in cert-manager 1.18. The ExperimentalGatewayAPISupport feature gate has been default-on since 1.15, but the gate alone isn't enough - the dedicated flag is. The two recursive-nameserver args are a workaround for my ISP hijacking DNS UDP/53 (a different post, but the gist: route the DNS-01 propagation check through cluster CoreDNS, which forwards over DoT).

external-dns has gateway-httproute in its sources list:

# ...
externalDns:
  values:
    sources:
      - ingress
      - service
      - gateway-httproute
# ...

That single line is what makes a new HTTPRoute auto-publish its hostnames to Cloudflare without anyone touching DNS.

Wire Up an App via HTTPRoute

The simplest example in the cluster - application foobar, two hostnames, two backends:

apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: foobar
  namespace: foobar
spec:
  parentRefs:
    - name: traefik-gateway
      namespace: traefik
      sectionName: websecure
    - name: traefik-gateway
      namespace: traefik
      sectionName: websecure-clustername
  hostnames:
    - "foobar.dev.ber1.clustername.io"
    - "foobar.clustername.io"
  rules:
    - matches:
        - path:
            type: PathPrefix
            value: /api
      backendRefs:
        - name: foobar-backend
          port: 3000
    - matches:
        - path:
            type: PathPrefix
            value: /
      backendRefs:
        - name: foobar-frontend
          port: 80

Two parentRefs because the route attaches to two listeners (one per cert domain). Two path-prefix rules because the upstream chart splits /api to a backend Service and / to a frontend Service.

Verify

kubectl -n traefik get svc traefik
# EXTERNAL-IP should be 10.31.0.81

dig +short foobar.dev.ber1.clustername.io @1.1.1.1
# returns 10.31.0.81

curl -I https://foobar.dev.ber1.clustername.io/
# 200

On the UCG side, the Traefik VIP shows up as a /32 with three next-hops, one per cluster node:

root@clustername-ucg-fiber:~# vtysh -c "show ip route bgp"
Codes: K - kernel route, C - connected, L - local, S - static,
       O - OSPF, B - BGP, T - Table, f - OpenFabric,
       t - Table-Direct,
       > - selected route, * - FIB route, q - queued, r - rejected, b - backup
       t - trapped, o - offload failure

B>* 10.31.0.80/32 [20/0] via 10.31.0.132, br300, weight 1, 02:13:09
  *                      via 10.31.0.161, br300, weight 1, 02:13:09
  *                      via 10.31.0.218, br300, weight 1, 02:13:09
B>* 10.31.0.81/32 [20/0] via 10.31.0.132, br300, weight 1, 05:13:18
  *                      via 10.31.0.161, br300, weight 1, 05:13:18
  *                      via 10.31.0.218, br300, weight 1, 05:13:18

The Traefik VIP installed in the UCG's BGP RIB with three ECMP next-hops. Lose a node and the corresponding next-hop disappears within hold-time (15 s).

That's it for app traffic. The same pattern - LoadBalancer Service with the right label, IP pinned via lbipam annotation - applies to anything else you want on its own VIP.

Floating IP for the Kubernetes API

Same goal, different mechanics. The standard pattern from the previous section doesn't work for the Kubernetes API for two reasons:

- The apiserver isn't a Pod. In k3s it runs as part of the k3s server host process on port 6443. There's nothing for a Service selector to match.
- A plain LoadBalancer Service has no active health-checking. A node going dark would leave kubectl retrying on dead routes for ~1-2 s every time the next-hop chosen by the kernel happened to be the dead one. Acceptable maybe, but worse than what you'd get from a real LB.

So this part of the cluster gets a slightly more elaborate setup: a selectorless Service, a manually-managed EndpointSlice, and cilium-envoy in the path with active TCP health checks. All three live in one Ansible-templated file so the EndpointSlice pulls node IPs straight from inventory.

Add the BGP IP to the apiserver TLS SANs

Before anything else, the apiserver's serving certificate has to include the new VIP and the hostname you'll point kubeconfig at. The k3s config template reads the SAN list from group_vars:

# ...
k3s_tls_sans: >-
  {{ (groups['k3s_server'] | map('extract', hostvars, 'ansible_host') | list)
     + ['10.31.0.80', 'kube.dev.ber1.clustername.io'] }}
# ...

Three node IPs from inventory, plus the BGP VIP and the DNS name. Re-running the k3s play rolls each control-plane node (serial: 1 on the joiners) and k3s regenerates the apiserver serving cert with the new SANs.

The Service and EndpointSlice

The Service+EndpointSlice pair (the CCEC follows in a moment, all from the same template):

apiVersion: v1
kind: Service
metadata:
  name: kube-apiserver-bgp
  namespace: kube-system
  labels:
    app.kubernetes.io/name: kube-apiserver-bgp
  annotations:
    "lbipam.cilium.io/ips": "10.31.0.80"
    "external-dns.alpha.kubernetes.io/hostname": "kube.dev.ber1.clustername.io"
spec:
  type: LoadBalancer
  ipFamilies: [IPv4]
  externalTrafficPolicy: Cluster
  ports:
    - name: https
      port: 6443
      targetPort: 6443
      protocol: TCP
---
apiVersion: discovery.k8s.io/v1
kind: EndpointSlice
metadata:
  name: kube-apiserver-bgp
  namespace: kube-system
  labels:
    kubernetes.io/service-name: kube-apiserver-bgp
addressType: IPv4
ports:
  - name: https
    port: 6443
    protocol: TCP
endpoints:
  - addresses: ["10.31.0.218"]
    nodeName: cat01
    conditions:
      ready: true
  - addresses: ["10.31.0.161"]
    nodeName: cat02
    conditions:
      ready: true
  - addresses: ["10.31.0.132"]
    nodeName: cat03
    conditions:
      ready: true

A few lines matter here:

- app.kubernetes.io/name: kube-apiserver-bgp is the label that makes the BGP advertisement select this Service.
- lbipam.cilium.io/ips: "10.31.0.80" pins the VIP. This IP becomes the kubeconfig server URL.
- external-dns.alpha.kubernetes.io/hostname makes external-dns publish the DNS A record for the hostname pointing at the VIP.
- externalTrafficPolicy: Cluster - not Local. This is the gotcha that took me longest to find. (Setting Local here silently empties the EDS endpoints on non-control-plane nodes - see the Pitfalls section.)
- The EndpointSlice is selectorless (the Service has no selector) and gets the kubernetes.io/service-name label that binds it to the Service. Each entry has nodeName set so Cilium can correlate to a node identity.

The Jinja loops over groups['k3s_server'] - adding or removing a control-plane node is a one-line inventory change and a re-run of the cilium role.
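The template itself isn't reproduced here, so as a stand-in, this Python sketch shows the same idea: one endpoint entry per inventory host. The inventory dict and the endpoint_slice helper are assumptions for illustration, not the actual Jinja from the role.

```python
import json

# Stand-in for the Ansible inventory: hostname -> ansible_host (values from this post).
inventory = {"cat01": "10.31.0.218", "cat02": "10.31.0.161", "cat03": "10.31.0.132"}


def endpoint_slice(hosts, name="kube-apiserver-bgp", port=6443):
    """Build the selectorless EndpointSlice as a plain dict, one endpoint per node."""
    return {
        "apiVersion": "discovery.k8s.io/v1",
        "kind": "EndpointSlice",
        "metadata": {
            "name": name,
            "namespace": "kube-system",
            "labels": {"kubernetes.io/service-name": name},
        },
        "addressType": "IPv4",
        "ports": [{"name": "https", "port": port, "protocol": "TCP"}],
        "endpoints": [
            {"addresses": [ip], "nodeName": node, "conditions": {"ready": True}}
            for node, ip in hosts.items()
        ],
    }


manifest = endpoint_slice(inventory)
print(json.dumps(manifest, indent=2))  # JSON is valid input for kubectl apply -f
```

Adding a fourth host to the dict adds a fourth endpoint and nothing else changes, which is the property the inventory-driven template is there to provide.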

Why CiliumClusterwideEnvoyConfig (CCEC) With Active Health Checks

If you stop here - just the Service plus EndpointSlice - it already works. Cilium advertises 10.31.0.80/32, the UCG ECMPs to all three nodes, and kube-proxy (or eBPF service map, since we have kpr=true) DNATs to one of the apiserver IPs. Failover happens through TCP retry - kubectl hits a dead node, gets connection refused or a timeout, retries against another. Visible pause, but functional.

cilium-envoy in front gives you much quicker failover though. Active TCP probes mark a dead apiserver unhealthy in ~3 seconds (unhealthy_threshold: 3 × interval: 1s); existing kubectl watch streams survive on long idle timeouts; new connections only land on healthy backends. That's the whole reason for the extra component.
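The ~3 seconds is simple arithmetic, and it is worth seeing how the other health-check knobs could stretch it. The sketch below is a back-of-the-envelope model, not Envoy's scheduler: the detection_window helper and its pessimistic case (every probe runs into the full timeout and the full jitter) are my assumptions. The numbers are the ones from the CCEC manifest in this post.

```python
def detection_window(interval_s, unhealthy_threshold, timeout_s, jitter_s=0.0):
    """Return (optimistic, pessimistic) seconds until a backend is marked unhealthy.

    Optimistic: every probe fails instantly (connection refused), probes run
    once per interval. Pessimistic: every probe runs into the timeout and every
    interval is stretched by the full jitter. Both are simplifications.
    """
    optimistic = unhealthy_threshold * interval_s
    pessimistic = unhealthy_threshold * (interval_s + jitter_s + timeout_s)
    return optimistic, pessimistic


fast, slow = detection_window(interval_s=1.0, unhealthy_threshold=3, timeout_s=1.0, jitter_s=0.1)
print(f"unhealthy after roughly {fast:.1f}s to {slow:.1f}s")
```

A stopped k3s process refuses connections immediately, which is the fast case; a node that drops packets silently is closer to the slow one.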

The prerequisite is Cilium replacing kube-proxy. kubeProxyReplacement: true in the Helm values (already set) plus disable-kube-proxy: true in k3s:

# ...
disable:
  - traefik # because I'm deploying it separately
  - metrics-server # because I'm deploying it separately
  - servicelb
disable-kube-proxy: true
# ...

(Order matters - apply the Cilium change first, then disable kube-proxy in k3s. See the Pitfalls section.)

The CiliumClusterwideEnvoyConfig (CCEC) Manifest

apiVersion: cilium.io/v2
kind: CiliumClusterwideEnvoyConfig
metadata:
  name: kube-apiserver-bgp
  annotations:
    cec.cilium.io/use-original-source-address: "false"
spec:
  services:
    - name: kube-apiserver-bgp
      namespace: kube-system
      listener: kube-apiserver-bgp-listener
  resources:
    - "@type": type.googleapis.com/envoy.config.listener.v3.Listener
      name: kube-apiserver-bgp-listener
      filter_chains:
        - filters:
            - name: envoy.filters.network.tcp_proxy
              typed_config:
                "@type": type.googleapis.com/envoy.extensions.filters.network.tcp_proxy.v3.TcpProxy
                stat_prefix: kube_apiserver_bgp_tcp
                cluster: kube-system/kube-apiserver-bgp
                idle_timeout: 3600s
    - "@type": type.googleapis.com/envoy.config.cluster.v3.Cluster
      name: kube-system/kube-apiserver-bgp
      type: EDS
      connect_timeout: 5s
      lb_policy: ROUND_ROBIN
      health_checks:
        - timeout: 1s
          interval: 1s
          unhealthy_threshold: 3
          healthy_threshold: 1
          interval_jitter: 0.1s
          no_traffic_interval: 1s
          tcp_health_check: {}
      outlier_detection:
        consecutive_local_origin_failure: 3
        split_external_local_origin_errors: true
        interval: 5s
        base_ejection_time: 30s

Walking through it:

- The cec.cilium.io/use-original-source-address: "false" annotation is mandatory for any CCEC not built by the Ingress or Gateway API controllers. (See Pitfalls.)
- services: binds the Envoy listener to the Service. This single field both redirects traffic destined for the Service into the listener AND tells Cilium to sync the Service's EndpointSlice into the EDS cluster of the same <ns>/<name>. No backendServices: needed.
- The Listener is a single tcp_proxy filter chain. No TLS termination - the apiserver speaks HTTPS and the bytes flow through opaquely. idle_timeout: 3600s because kubectl watch and informer streams are long-lived; the default would cut them.
- The Cluster is type: EDS (dynamic - Cilium pushes endpoints), lb_policy: ROUND_ROBIN, and the meaningful part: tcp_health_check: {} connect-only probes every second, mark unhealthy after three failures, mark healthy on the first success. interval_jitter: 0.1s - not 100ms (see Pitfalls again).
- outlier_detection ejects backends that pass the TCP probe but fail mid-request - belt-and-braces alongside the active checks.

The template also has a feature-flagged STATIC fallback (cluster type: STATIC with endpoints inlined from inventory) for the day when Cilium upstream regresses something on this code path. Default is eds. I haven't needed the fallback yet.

Switch the kubeconfig

The Ansible keycloak_config role generates an OIDC kubeconfig pointing at one of the node IPs by default. Once the BGP API endpoint is up, redirect that one cluster entry:

kubectl config set-cluster clustername-ber-oidc \
  --server=https://kube.dev.ber1.clustername.io:6443

The certificate-authority-data stays the same (the k3s server CA didn't change - you only added SANs to the cert it serves). The OIDC user block stays the same. Only the server URL changes.

Verify Failover

# 1. Confirm three healthy backends
kubectl -n kube-system exec ds/cilium -c cilium-agent -- cilium-dbg envoy admin config | \
  jq '.configs[] | select(."@type" | endswith("EndpointsConfigDump"))
      | .dynamic_endpoint_configs[].endpoint_config
      | select(.cluster_name == "kube-system/kube-apiserver-bgp")'

# 2. Stop one apiserver
ssh clustername@cat01 'sudo systemctl stop k3s'

# 3. Within ~3s the affected backend goes UNHEALTHY in the dump above.
#    kubectl through 10.31.0.80 / kube.dev.ber1.clustername.io keeps responding.
ssh clustername@cat01 'sudo systemctl start k3s'

# 4. Within ~1s the apiserver is HEALTHY again.

Operate

A short reference for day 2.

Adding a Control-Plane Node

I add the new host to inventory/hosts.yaml under the k3s_server group and keep the first host unchanged, since it's the cluster initializer. Then:

ansible-playbook -i inventory/hosts.yaml k3s.yaml --limit cat01,cat04

The cilium phase re-templates kube-apiserver-bgp.yaml.j2, picking up the new IP into the EndpointSlice. CCEC EDS sync pushes the new endpoint to every Envoy. No manual step for HA membership. The k3s phase rolls the joiners with the existing TLS SAN list (the BGP VIP and hostname don't change).

Rotating a Node

kubectl drain <node> --ignore-daemonsets --delete-emptydir-data
kubectl delete node <node>
# remove the node from inventory/hosts.yaml under k3s_server
ansible-playbook -i inventory/hosts.yaml k3s.yaml --tags cilium

Where to Look When It Breaks

CCEC validation surfaces in agent logs, not on .status - don't waste time on kubectl describe ccec. The four diagnostics that matter:

# 1. Agent's own validation errors (look for "Ignoring invalid CiliumEnvoyConfig JSON")
kubectl logs -n kube-system ds/cilium -c cilium-agent

# 2. What actually made it into Envoy
kubectl exec -n kube-system ds/cilium -c cilium-agent -- cilium-dbg envoy admin config

# 3. Concise cluster list
kubectl exec -n kube-system ds/cilium -c cilium-agent -- cilium-dbg envoy admin clusters

# 4. The load-balancer Writer state - separate code path from the eBPF map
kubectl exec -n kube-system ds/cilium -c cilium-agent -- cilium-dbg statedb services
kubectl exec -n kube-system ds/cilium -c cilium-agent -- cilium-dbg statedb backends

The last one is the ground truth for what CCEC actually sees, and it'll diverge from the eBPF map when something is wrong in xDS land. Check both.

Pitfalls

The reference for everything called out inline above.

externalTrafficPolicy: Local Silently Empties EDS Endpoints

The headline gotcha. Symptom: curl -k https://10.31.0.80:6443/healthz returns TLS connect error: unexpected eof while reading. Direct curl -k to a node IP returns 401 Unauthorized (apiserver healthy). The CCEC's cluster shows up in cilium-dbg envoy admin clusters as added_via_api: true, but the EndpointsConfigDump for the dynamic cluster is empty:

{
  "cluster_name": "kube-system/kube-apiserver-bgp",
  "policy": {"overprovisioning_factor": 140}
}

No endpoints array. Cilium parsed the CCEC, registered the cluster, but pushed an empty ClusterLoadAssignment. Envoy accepts the connection on the listener, has no upstream to forward to, and closes immediately. That's the EOF.

Root cause is in the Cilium 1.19.3 source. The CCEC backend-sync controller calls the LB Writer's SelectBackends for each Service the CCEC binds, with the Frontend argument as nil (pkg/ciliumenvoyconfig/controller.go:369):

newEndpoints = computeLoadAssignments(
    svc.Name,
    res.ClusterReferences,
    svc.PortNames,
    bs.writer.SelectBackends(wtxn, bes, svc, nil))

Inside DefaultSelectBackends (writer.go:385), the nil Frontend triggers the Local-policy fallback:

onlyLocal = svc.ExtTrafficPolicy == loadbalancer.SVCTrafficPolicyLocal

And a few lines down the actual filter:

if onlyLocal {
    if len(be.NodeName) != 0 && be.NodeName != w.nodeName {
        continue
    }
    ...
}

Combined with nodeName set on every EndpointSlice entry, every cilium-agent on a non-control-plane node filters out every backend. The per-node Envoy gets an empty cluster.
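The effect of that filter is easy to reproduce outside Cilium. The function below is a shell re-creation for illustration only, not Cilium code: select_backends and the ip@node argument format are made up, and the node names and IPs in the usage lines are hypothetical.

```shell
# Usage: select_backends <only_local: true|false> <agent_node> <ip@node>...
# Mirrors the quoted condition: under Local, a backend with a non-empty
# node name that differs from the agent's own node is skipped.
select_backends() {
  only_local=$1; agent_node=$2
  shift 2
  for be in "$@"; do
    ip=${be%%@*}
    node=${be#*@}
    if [ "$only_local" = true ] && [ -n "$node" ] && [ "$node" != "$agent_node" ]; then
      continue   # backend lives on another node: dropped under Local
    fi
    echo "$ip"
  done
}
```

select_backends true worker01 10.0.0.1@cat01 10.0.0.2@cat02 10.0.0.3@cat03 prints nothing, which is the empty ClusterLoadAssignment seen in the dump. The same call with false prints all three addresses, and with true and agent node cat01 it prints only 10.0.0.1.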

Switching the Service to externalTrafficPolicy: Cluster flips onlyLocal = false, the filter is skipped entirely, all three backends appear in EDS marked HEALTHY, kubectl through the VIP works.

Cluster is the correct answer here, not a workaround. The usual Local motivations - source IP preservation, one less hop - don't apply: cilium-envoy terminates the inbound TCP and re-opens upstream, so the apiserver sees Envoy's IP regardless. The "extra hop" is microseconds on a 20 Gbit/s LACP fabric. And Cluster is what makes EDS sync work in this code path at all.

protobuf Duration Wants Seconds, Not Milliseconds

Cilium parses CCEC resources[] entries as protobuf JSON. The google.protobuf.Duration field rejects 100ms:

Ignoring invalid CiliumEnvoyConfig JSON: proto: invalid google.protobuf.Duration value "100ms"

Use 0.1s. The insidious part: only the affected resource gets rejected. The Listener registers cleanly, the Cluster fails validation, Envoy has a listener with no upstream, connections accept and immediately close. Looks identical to a network problem. Always check kubectl logs -n kube-system ds/cilium -c cilium-agent first.

Every Duration in CCEC resources[] uses <seconds>s form. For example: 0.1s, not 100ms.
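A rendered manifest can be scanned for this before it ever reaches the cluster. The check below is a rough heuristic, not a protobuf validator: check_durations is a hypothetical helper that flags any YAML value made of a number followed by ms, us, ns, m or h, and the file path passed to it is up to you.

```shell
# Flag values such as 100ms or 5m that protobuf JSON Durations reject.
# Prints the offending lines with line numbers; returns 1 if any are found.
check_durations() {
  if grep -nE ':[[:space:]]*"?[0-9.]+(ms|us|ns|m|h)"?[[:space:]]*$' "$1"; then
    echo "found non-seconds durations; use the <seconds>s form, e.g. 0.1s" >&2
    return 1
  fi
}
```

Because it matches on the value alone, it will also flag unrelated fields that happen to look like a duration, so treat a hit as a prompt to look rather than as proof.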

cec.cilium.io/use-original-source-address Is Required for Hand-Rolled CCECs

For any CCEC not created by the Ingress or Gateway API controllers - which is to say, anything you write yourself - pin this annotation to "false":

metadata:
  annotations:
    cec.cilium.io/use-original-source-address: "false"

Otherwise Envoy binds the upstream socket to the original client source IP/port. Concurrent kubectl HTTP/2 streams from one client end up with the same 5-tuple toward a single apiserver, the apiserver sees what looks like a duplicate connection, and they tear each other down.

Documented in the upstream Cilium docs under L7-Aware Traffic Management - not on the main CCEC reference page. Easy to miss and impossible to diagnose without the docs; the symptom is "kubectl works for some commands and fails on others, depending on whether they multiplex".
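To find hand-rolled CCECs that lack the pin, the annotation can be listed next to each resource name. A sketch under assumptions: ccecs_missing_annotation is an invented name, and the query relies on kubectl's jsonpath accepting backslash-escaped dots in the annotation key.

```shell
# Print the names of CiliumClusterwideEnvoyConfigs whose
# cec.cilium.io/use-original-source-address annotation is not "false".
ccecs_missing_annotation() {
  kubectl get ccec -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.metadata.annotations.cec\.cilium\.io/use-original-source-address}{"\n"}{end}' |
    awk -F'\t' '$1 != "" && $2 != "false" { print $1 }'
}
```

An empty result means every CCEC has the annotation set to "false". Resources generated by a controller would show up here too, so the list needs a human eye.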

CCEC Validation Surfaces in Agent Logs, Not on .status

The CCEC CR's .status field is empty in 1.19, even when validation rejects the resource. kubectl describe ccec tells you nothing useful. Use:

- kubectl logs -n kube-system ds/cilium -c cilium-agent for the agent's own validation errors.
- cilium-dbg envoy admin config for what made it through to Envoy.
- cilium-dbg statedb services and ... backends for the load-balancer Writer state.
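One caveat with the first command: kubectl logs against ds/cilium reads from a single pod of the DaemonSet. To grep every agent at once, a label selector works. This is a sketch, assuming the agent pods carry the k8s-app=cilium label; the function name is made up.

```shell
# Search the logs of all cilium-agent pods for rejected CCEC resources.
# --tail=-1 is needed because a selector defaults to the last 10 lines;
# --prefix shows which pod each line came from.
ccec_validation_errors() {
  kubectl logs -n kube-system -l k8s-app=cilium -c cilium-agent \
    --prefix --tail=-1 |
    grep -F 'Ignoring invalid CiliumEnvoyConfig JSON'
}
```

The function returns non-zero when nothing matches, which is the good case here.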

kubeProxyReplacement Migration Order

Don't flip both at once.

- Set kubeProxyReplacement: true in Cilium values, run the cilium role. Cilium starts programming services in eBPF alongside kube-proxy iptables. Both are active; eBPF wins for new connections.
- Set disable-kube-proxy: true in k3s config, run the k3s role. kube-proxy goes away on the next k3s restart. Services keep working because Cilium has been doing it for a while already.

The reverse order leaves a brief window where neither side is doing service routing.
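Before the second step, it is worth confirming that the agent really took over service handling. A hedged sketch: kpr_enabled is a hypothetical helper, and it assumes cilium-dbg status prints a KubeProxyReplacement: line whose value starts with True when the feature is on.

```shell
# Succeeds only if the agent reports kube-proxy replacement as enabled.
# Assumption: the status output has a "KubeProxyReplacement: True ..." line.
kpr_enabled() {
  kubectl -n kube-system exec ds/cilium -c cilium-agent -- cilium-dbg status |
    grep -Eq '^KubeProxyReplacement:[[:space:]]+True'
}
```

Something like kpr_enabled && echo "safe to disable kube-proxy" fits between the two role runs. Note that it asks one agent only, so repeat it per node if in doubt.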

The bind: address already in use Red Herring

During the migration above, the cilium-agent will log:

listen tcp :31097: bind: address already in use

It's the loadbalancer-healthserver trying to bind the auto-allocated healthCheckNodePort for an eTP=Local Service while k3s's bundled kube-proxy is still using the same port. Resolves itself when k3s restarts with disable-kube-proxy: true. Not a real failure, just transient noise.

(Also irrelevant after switching the kube-apiserver-bgp Service to eTP=Cluster - no healthCheckNodePort is allocated.)

The shape of the lesson: this is a five-line YAML change buried in three CRDs that took the longest to find, the docs don't warn you about it, and the symptom looks like a network problem. The router-as-LB pattern is otherwise solid. Once the Cilium-specific switches are in the right positions, you stop thinking about the data path and the cluster does what every public-cloud reference architecture does, only without the bill (from a cloud provider).